Robotic and AI Food Lab

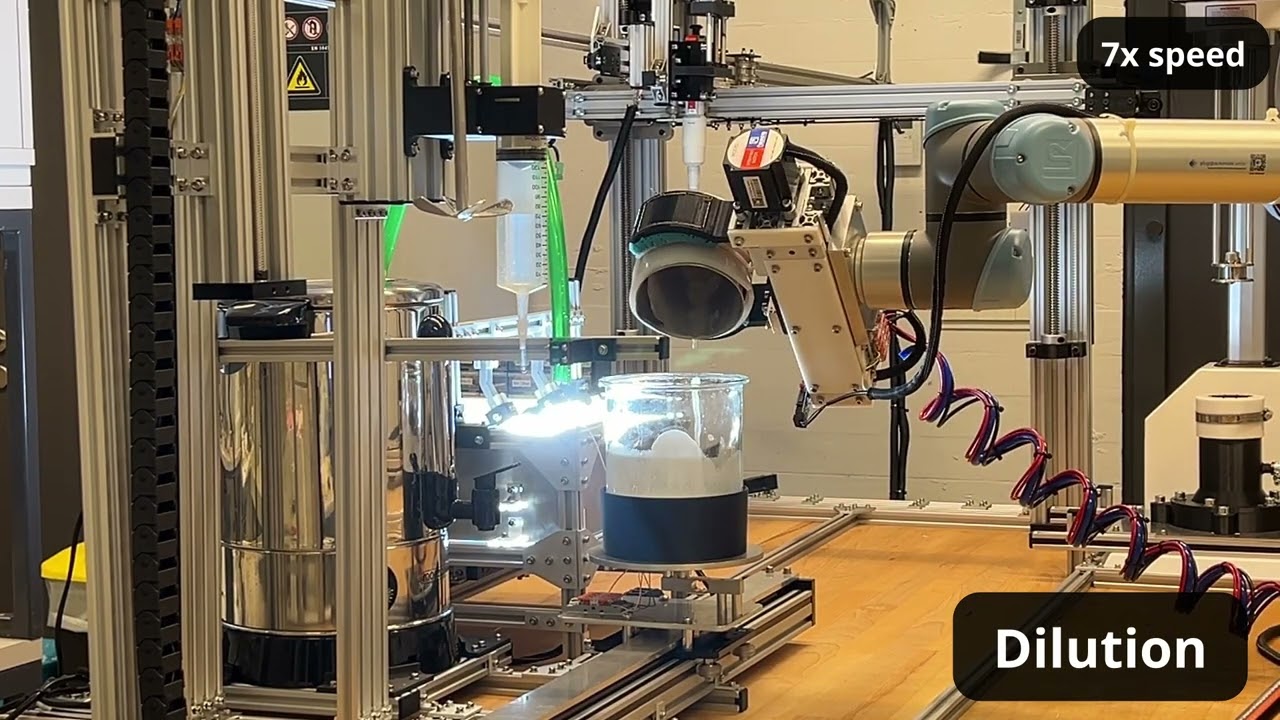

A robotic system that runs lab-scale food science experiments, and AI that learns from them to find optimal formulations. Built for food emulsions like plant-based milks and coffee creamers.

- Fully automated sample preparation from ingredient dosing to cleaning. Viscosity, pH, and stability measurements included.

- Bayesian Optimization pipeline identifies optimal formulations in as few as 20 trials.

- In active development at EPFL × Nestlé R&D. Building the AI agent that runs the lab and optimizes the beverage autonomously.

- 3,000+ custom parts and tens of thousands of lines of code.
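
To make the Bayesian Optimization bullet concrete, here is a minimal sketch of such a loop in plain Python. It is not the project's pipeline: the two normalized ingredient fractions, the synthetic `stability_score` objective, the RBF kernel settings, and every function name are assumptions chosen for illustration. A real run would replace `stability_score` with a measurement from the robot.

```python
import math
import random


def rbf(a, b, length=0.2):
    """Squared-exponential kernel between two points in [0, 1]^d."""
    sq = sum((p - q) ** 2 for p, q in zip(a, b))
    return math.exp(-sq / (2 * length ** 2))


def cholesky(A):
    """Lower-triangular L with L L^T = A (A symmetric positive definite)."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = A[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(s) if i == j else s / L[j][j]
    return L


def forward(L, b):
    """Solve L y = b by forward substitution."""
    y = []
    for i, bi in enumerate(b):
        y.append((bi - sum(L[i][k] * y[k] for k in range(i))) / L[i][i])
    return y


def backward(L, y):
    """Solve L^T x = y by back substitution."""
    n = len(y)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x


def fit_gp(X, y, noise=1e-4):
    """Fit a Gaussian-process surrogate with a constant mean."""
    mu = sum(y) / len(y)
    K = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(X)]
         for i, a in enumerate(X)]
    L = cholesky(K)
    alpha = backward(L, forward(L, [v - mu for v in y]))
    return L, alpha, mu


def predict(X, model, x_new):
    """Posterior mean and standard deviation at x_new."""
    L, alpha, mu = model
    k = [rbf(a, x_new) for a in X]
    mean = mu + sum(ki * ai for ki, ai in zip(k, alpha))
    v = forward(L, k)
    var = max(1.0 - sum(vi * vi for vi in v), 1e-12)
    return mean, math.sqrt(var)


def expected_improvement(mean, std, best):
    """Expected improvement over the best observed score (maximization)."""
    z = (mean - best) / std
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    return (mean - best) * cdf + std * pdf


def optimize(objective, dim=2, n_init=5, n_trials=20, n_candidates=500, seed=0):
    """Run n_init random trials, then pick each next trial by maximizing EI."""
    rng = random.Random(seed)
    sample = lambda: [rng.random() for _ in range(dim)]
    X = [sample() for _ in range(n_init)]
    y = [objective(x) for x in X]
    while len(y) < n_trials:
        model = fit_gp(X, y)
        best = max(y)
        x_next = max((sample() for _ in range(n_candidates)),
                     key=lambda c: expected_improvement(*predict(X, model, c), best))
        X.append(x_next)
        y.append(objective(x_next))
    return X, y


def stability_score(x):
    """Hypothetical stand-in for a measured score; peaks at (0.3, 0.7)."""
    emulsifier, oil = x
    return -((emulsifier - 0.3) ** 2 + (oil - 0.7) ** 2)


X, y = optimize(stability_score)
best_score = max(y)
print("best formulation:", X[y.index(best_score)], "score:", best_score)
```

The budget of 20 trials mirrors the figure quoted above. The split into 5 random starts and 15 guided trials is an arbitrary choice for the sketch.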

Robotic Cooking and Food Modelling

A robotic system that autonomously cooks food products while capturing what's happening inside them in real time. The resulting datasets unlock prediction of cooking outcomes for untested formulations using foundation models.

- Cooks, flips, and measures simultaneously: temperature, moisture, electrical conductivity, color, pressing and cutting force.

- Generates large-scale multi-modal datasets across 10+ product types, from plant-based burgers to tofu.

- LLM-based predictive modeling of cooking outcomes, validated on prototype plant-based burgers.

- 90% of the workflow automated. 24 experiments per 8-hour day, 3× more than manual.

Emulsion Stability using Computer Vision

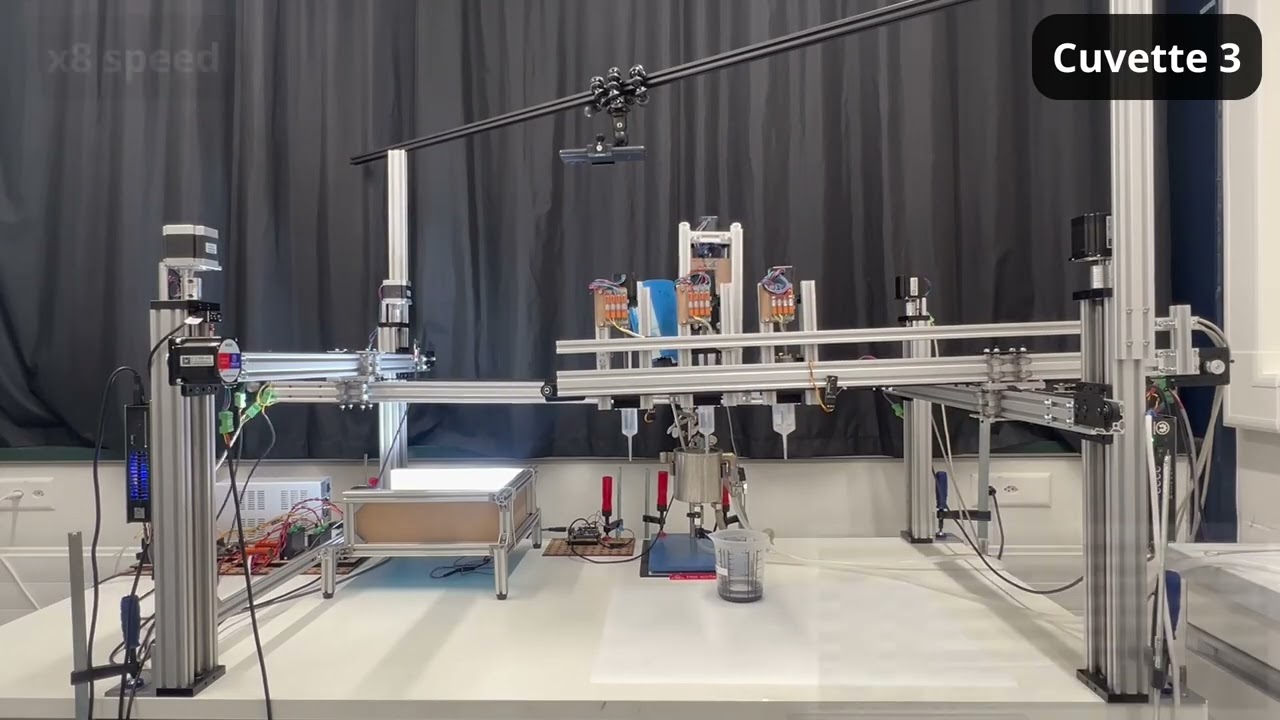

A robotic system that automates food emulsion stability testing. Performs autonomous titration and applies a proprietary computer vision algorithm to detect instability. Replaces a $120,000 lab instrument and manual visual inspection.

- Proprietary computer vision algorithm monitors up to 10 samples in parallel, continuously and in real time. Previously: 1 sample at a time.

- No pre-training required. Works on bright, dark, colored, and transparent emulsions.

- 8× more trials per day, 95% less manual work.

- Deployed and in active use at Nestlé R&D. Patent pending.

Autonomous Lab Inspection

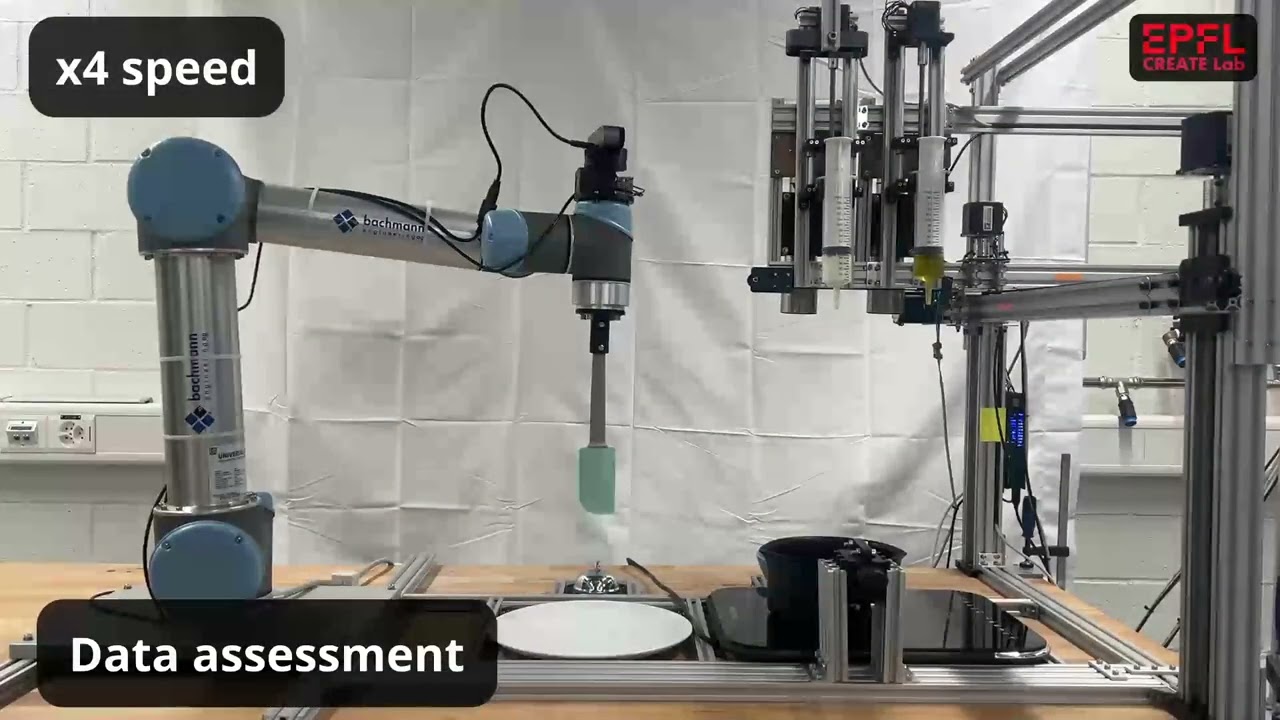

A robotic lab that autonomously inspects itself before an experiment. Uses foundation models to detect failures, check equipment, and decide whether to proceed.

- Analyzes visual and audio data to assess equipment status and provide troubleshooting steps.

- Detects equipment motion, operational status, and failures using cameras, microphones, and large language models.

- Distinguishes between critical failures that stop the experiment and minor issues requiring human intervention.
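
The proceed-or-stop decision described above can be sketched as a small triage rule. This is an illustrative assumption, not the deployed logic: the `Severity` levels, the `Finding` record, and the three outcome strings are hypothetical names, and in the real system the findings would come from the camera, microphone, and language-model analysis.

```python
from dataclasses import dataclass
from enum import Enum


class Severity(Enum):
    OK = 0
    MINOR = 1      # needs a human to step in, experiment is not at risk
    CRITICAL = 2   # experiment must not start


@dataclass
class Finding:
    equipment: str
    severity: Severity
    note: str = ""


def decide(findings):
    """Return 'abort', 'needs_human', or 'proceed' based on the worst finding."""
    worst = max((f.severity.value for f in findings), default=Severity.OK.value)
    if worst == Severity.CRITICAL.value:
        return "abort"
    if worst == Severity.MINOR.value:
        return "needs_human"
    return "proceed"


report = [
    Finding("pump", Severity.OK),
    Finding("stirrer", Severity.MINOR, "unusual noise"),
]
print(decide(report))
```

Taking the worst severity across all findings means a single critical failure stops the run regardless of how many other checks pass.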